Code Review: Is CodeRabbit v1.8 the Messiah, or Just a Very Fluffy Bunny?

Ah, code review. That glorious crucible where dreams of clean code often go to die, or at least get extensively nitpicked. For generations, developers have endured this ritual, meticulously poring over diffs, debating semicolons, and occasionally discovering actual bugs. But lo, a new contender emerges from the digital ether: CodeRabbit v1.8, promising to revolutionize this age-old torment. Is it the enlightened path we’ve been seeking, or merely another tool destined to gather dust alongside our half-read programming books? Let’s plunge into the rabbit hole.

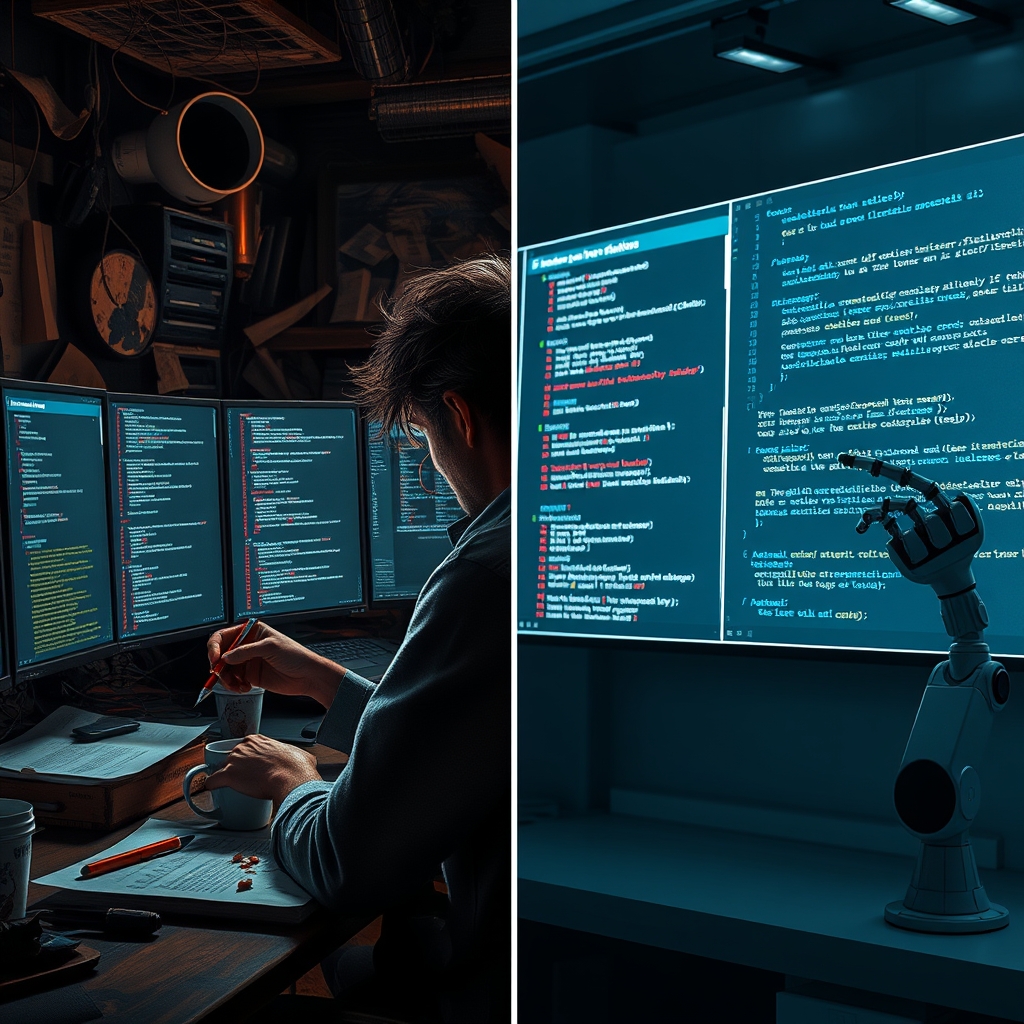

Quick Overview: The Contenders

On one side, we have CodeRabbit v1.8, the latest iteration of a platform that boldly claims to enhance code quality and accelerate review cycles, largely through the mystical arts of AI and automation. It’s the shiny new toy, promising to whisper sweet nothings (or rather, actionable suggestions) into your ear as you navigate the treacherous waters of pull requests. Its core promise is to take the grunt work out of review, leaving humans to focus on the ‘truly important’ architectural debates, or perhaps just their coffee.

On the other side, we have the ‘alternatives.’ This broad category encompasses everything from the built-in review capabilities of platforms like GitHub and GitLab (which, let’s be honest, are often just glorified comment sections attached to a diff viewer), to more specialized, albeit sometimes archaic, dedicated tools like Review Board or Crucible. These are the workhorses, the tried-and-true, the ‘if it ain’t broke, don’t fix it’ brigade, even if ‘it’ often feels a bit wobbly and on its last legs.

Feature Comparison: From Manual Drudgery to Automated Enlightenment

CodeRabbit v1.8 truly tries to distinguish itself in the feature department, and frankly, it often succeeds in being less… manual. Its enhanced code review features are where the magic (or at least, the complex algorithms) happens. We’re talking about AI-driven insights that go beyond simple linting. It promises to understand context, identify subtle logical flaws, suggest performance optimizations, and even flag potential security vulnerabilities before your overworked human reviewer has even had their first espresso. The impact on workflow and quality is ostensibly profound: faster reviews, fewer missed issues, and a delightful reduction in the emotional labor of pointing out yet another forgotten null check.

User feedback, as one might expect, often highlights the joy of automated suggestions and the sheer relief of not having to type out the same boilerplate comment for the hundredth time. It’s like having a hyper-efficient, slightly sarcastic junior developer reviewing your code before the senior one even sees it.

The alternatives, bless their hearts, offer a rather more traditional experience. GitHub Pull Requests, for instance, provides a perfectly serviceable diff viewer and a robust commenting system. You can suggest changes, approve, or request more changes. It’s effective, but it relies entirely on human vigilance, expertise, and the willingness to meticulously scrutinize every line. There’s no benevolent AI whispering wisdom; just your own tired eyes and the occasional, passive-aggressive emoji from a colleague. Dedicated tools might offer slightly more structured workflows or metrics, but they rarely venture into the realm of intelligent analysis.

Pricing Comparison: The Cost of Progress (or Laziness)

Here’s where the rubber meets the road, or rather, where your wallet meets the cloud. CodeRabbit v1.8, with its suite of advanced features and AI wizardry, naturally comes with a price tag. It typically operates on a subscription model, often tiered by the number of active users or repositories. For teams drowning in code and desperate for efficiency, this cost might be justified as an investment in sanity and quality. One might even call it a bargain for the privilege of outsourcing some of the brain-numbing work.

The alternatives, particularly the built-in solutions from GitHub and GitLab, are often ‘free’ in the sense that they’re bundled with your existing platform subscription. Of course, ‘free’ often comes with hidden costs: the countless hours spent on manual reviews, the bugs that slip through the cracks, and the collective existential dread of your development team. So, while the direct monetary cost is lower, the opportunity cost and the cost in developer morale can be astronomical. Dedicated tools might have their own licensing fees, which can range from reasonable to ‘are you serious?’ depending on the vendor.
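For anyone who would rather settle the ‘investment versus hidden cost’ argument with arithmetic than with vibes, here is a minimal break-even sketch. Every number in it is a made-up placeholder, not CodeRabbit’s actual pricing or anyone’s real salary, and the function name is purely illustrative.

```python
def break_even_hours(seats: int, price_per_seat: float, hourly_rate: float) -> float:
    """Review hours a paid tool must save per month to cover its own cost.

    All inputs are assumptions supplied by the caller; nothing here reflects
    real vendor pricing.
    """
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    monthly_cost = seats * price_per_seat
    return monthly_cost / hourly_rate


# Hypothetical team: 10 seats at 20.0 per seat per month,
# with developer time valued at 80.0 per hour.
hours = break_even_hours(seats=10, price_per_seat=20.0, hourly_rate=80.0)
print(f"Break-even: {hours:.1f} review hours saved per month")
```

If the whole team cannot claw back that many hours of review time in a month, the ‘free’ built-ins win on money, if not on morale.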

Ease of Use: From Intuitive to ‘Is This Even English?’

CodeRabbit v1.8 generally prides itself on ease of integration and an intuitive user experience. It aims to slot seamlessly into existing Git workflows (GitHub, GitLab, Bitbucket), often appearing as another helpful bot in your PR comments. The learning curve for its core functionalities is touted as minimal, allowing teams to adopt it without needing a week-long training seminar. One might almost believe it was designed for actual developers, not just AI researchers.

The alternatives offer a mixed bag. GitHub’s and GitLab’s review interfaces are, by now, familiar to most developers. They are straightforward, if not particularly exciting. Learning to use them is akin to learning to ride a bicycle – everyone does it, and it gets the job done. More specialized tools, however, can sometimes feel like trying to pilot a space shuttle with a joystick designed for an Atari 2600. Configuration can be a nightmare, and the UI might have been designed by someone who last saw sunlight in the early 2000s.

Performance: The Need for Speed (and Accuracy)

CodeRabbit v1.8 boasts about its ability to deliver rapid feedback. The AI analysis is often near-instantaneous, providing suggestions shortly after a pull request is opened or updated. This speed is crucial for maintaining flow and preventing review bottlenecks. When it works, it’s like having a tireless, omniscient reviewer constantly on standby. The accuracy of its suggestions, of course, is the ultimate test, and v1.8 aims to refine this with each iteration, attempting to minimize the ‘false positives’ that can quickly erode trust.
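Since trust hinges on how often the bot cries wolf, a team could keep its own rough tally. The sketch below assumes you count, by hand or otherwise, how many automated suggestions were accepted and how many were dismissed, and treats the dismissed share as a crude proxy for false positives. The function and the counts are hypothetical, not something CodeRabbit reports.

```python
def dismissal_rate(accepted: int, dismissed: int) -> float:
    """Share of automated suggestions that reviewers dismissed.

    A rough stand-in for the false-positive problem: a dismissed suggestion
    is not necessarily wrong, only unwanted.
    """
    if accepted < 0 or dismissed < 0:
        raise ValueError("counts must be non-negative")
    total = accepted + dismissed
    if total == 0:
        return 0.0  # no suggestions yet, so nothing to distrust
    return dismissed / total


# Hypothetical month: 30 suggestions accepted, 10 dismissed.
rate = dismissal_rate(accepted=30, dismissed=10)
print(f"Dismissed share: {rate:.0%}")
```

Watching this number over a few weeks says more about whether the suggestions are earning their keep than any single impressive catch.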

Performance for the alternatives is largely dictated by human factors. How quickly does your colleague get around to reviewing your code? How many open pull requests are ahead of yours? The speed of feedback is directly proportional to the availability and diligence of your human reviewers. While the platforms themselves are generally performant in terms of loading diffs, the ‘performance’ of the review process itself is a wholly human affair, subject to coffee breaks, meetings, and the occasional existential crisis.

Best Use Cases for Each: Who Should Be Chasing Which Rabbit?

CodeRabbit v1.8:

- Large, Busy Teams: Drowning in pull requests and seeking to accelerate review cycles without sacrificing quality.

- High-Quality Standards: Teams where every bug counts, and automated assistance can provide an extra layer of scrutiny.

- Complex Codebases: Where human reviewers might struggle to keep all context in mind, AI can offer consistent analysis.

- Budget for Efficiency: Teams willing to invest in tools that promise to save developer time and improve outcomes.

Alternatives (e.g., GitHub/GitLab Built-ins):

- Small Teams/Startups: Where budget is tight, and the team size allows for more hands-on, synchronous reviews.

- Simpler Projects: Codebases that are less complex and where the overhead of an advanced AI tool might be overkill.

- Preference for Manual Control: Teams that prefer the human element to be entirely in charge, perhaps distrusting AI suggestions.

- Existing Platform Loyalty: Teams deeply embedded in a specific Git platform and content with its native offerings.

Comparison Summary: The Verdict (Sort Of)

- Features: CodeRabbit v1.8 offers advanced AI-driven analysis, automated suggestions, and context awareness. Alternatives provide robust manual review capabilities.

- Pricing: CodeRabbit is subscription-based, an investment in automation. Alternatives are often ‘free’ as part of a platform, with hidden costs in manual effort.

- Ease of Use: CodeRabbit aims for seamless integration and intuitive AI interaction. Alternatives are familiar, but dedicated tools can be cumbersome.

- Performance: CodeRabbit offers rapid, automated feedback. Alternatives rely on human reviewer availability and speed.

So, which rabbit should you chase? If your team is perpetually buried under a mountain of pull requests, if the quality of your code is paramount, and if you’re willing to invest in a tool that promises to give your developers more time to actually develop (or at least enjoy their lunch), then CodeRabbit v1.8 is certainly worth a serious look. It’s not just a fancy linter; it’s an attempt to elevate the entire code review process from a chore to a more intelligent, collaborative effort. However, if your team is small, your budget is tighter than a drum, and you genuinely enjoy the camaraderie (or passive-aggressive banter) of a purely human-driven review process, then the tried-and-true alternatives will continue to serve you adequately. Just remember to bring extra coffee.