CodeRabbit v1.8: Is Your Code Review Finally Getting a Brain, or Just Another Expensive Toy?

Ah, the eternal quest for pristine code, a journey fraught with peril, procrastination, and the occasional passive-aggressive comment on a pull request. In this modern age of software development, where every byte is sacred and every deadline is yesterday, the humble code review has emerged as both a necessary evil and a potential bottleneck. Enter CodeRabbit v1.8, sashaying onto the scene with promises of AI-powered enlightenment. But does it truly elevate us from the primordial ooze of manual scrutiny, or is it merely another shiny bauble in the ever-expanding toolkit of developer distractions? Let’s peel back the layers of marketing hype and compare this digital marvel to its more, shall we say, ‘traditional’ counterparts.

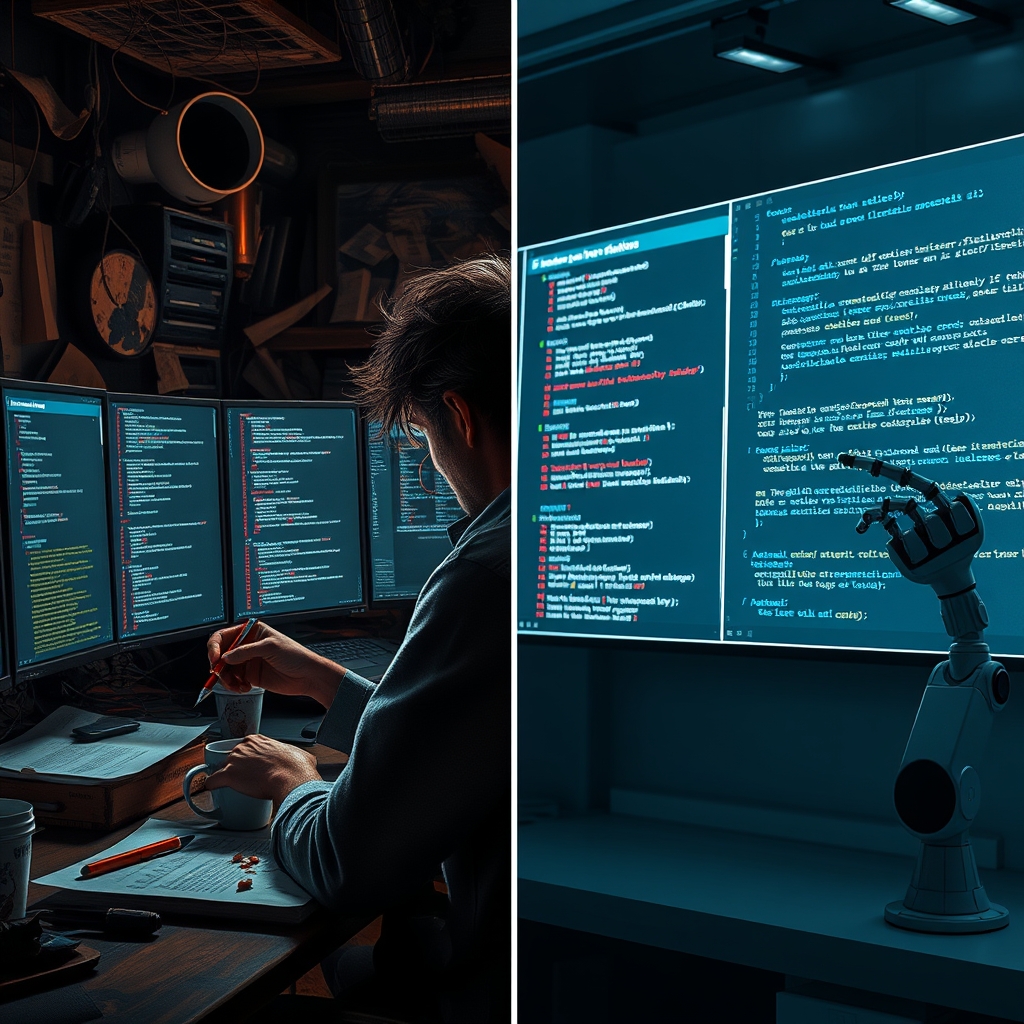

Quick Overview: The AI Savior vs. The Human Grind

CodeRabbit v1.8 positions itself as the sophisticated, AI-driven co-pilot for your code review process. It’s designed to automate the mundane, highlight the critical, and generally make you feel like you’ve outsourced your most tedious mental labor to a silicon savant. Think of it as that annoyingly smart intern who actually *reads* all the documentation. Its core appeal lies in its ability to generate contextual summaries, suggest improvements, and even catch those elusive nits that your bleary-eyed senior developer might miss at 3 AM. It’s for those who believe their time is worth more than endless scrolling through diffs.

On the other side of the ring, we have the ‘alternative solutions’ – a broad category ranging from the venerable, battle-tested human eyeball (often accompanied by a strong cup of coffee and a growing sense of existential dread) to basic static analysis tools that scream about missing semicolons but offer little in the way of insightful architectural feedback. These alternatives are cheap, sometimes even ‘free,’ but they come with a hidden cost: your sanity, your team’s velocity, and the occasional production bug that could have been caught if someone hadn’t been too busy explaining why a variable name should be `customerOrderDetailsList` instead of `codl`.

Feature Comparison: The Brains vs. The Brawn

CodeRabbit v1.8’s Enhanced Code Review Features

CodeRabbit v1.8 boasts an impressive array of enhancements that promise to transform your code review experience from a chore into, well, a slightly less annoying chore. Its headline feature is the AI-driven contextual review, which purports to understand the *intent* behind the code changes, not just the syntax. It generates concise summaries of pull requests, making it easier for reviewers to grasp the ‘what’ and ‘why’ without deciphering hieroglyphics. Automated improvement suggestions, bug detection, and even security vulnerability checks are par for the course, all delivered with an air of detached algorithmic superiority. It integrates with popular Git platforms, meaning you don’t even have to leave your pull request to be judged by an AI. User feedback often highlights the sheer *volume* of helpful suggestions, though some cynics might argue it’s just telling them what they already knew, but faster.

Alternative Solutions: The Manual Meanderings

The alternatives? They offer the raw, unfiltered experience of human interaction. You get to read every line, every character, and then formulate your feedback, often in prose that would make a Victorian novelist blush. Basic diff tools show you what changed, but rarely *why* it changed or what the implications are. Static analysis tools are great for catching low-hanging fruit (and often yelling about style guides), but they lack the semantic understanding that CodeRabbit claims to possess. The ‘enhancement’ here is often the reviewer’s own accumulated wisdom (or biases), applied with varying degrees of consistency depending on their caffeine intake. The impact on workflow is, predictably, directly proportional to the number of lines changed and the reviewer’s current mood. Quality? Well, that’s entirely dependent on the human behind the keyboard, which, as we all know, is a rather unreliable variable.

Pricing Comparison: The Investment vs. The Illusion of Free

CodeRabbit v1.8, like any advanced piece of technology, comes with a price tag. It’s not free, because artificial intelligence, it turns out, isn’t powered by good vibes alone. The pricing structure typically scales with usage, meaning the more code you feed it, the more you pay. This is positioned as an investment in efficiency, quality, and ultimately, developer happiness (a concept often valued more in theory than in practice). For teams drowning in pull requests, the ROI argument is compelling: spend a bit more on CodeRabbit, save countless developer hours, and potentially avoid costly production errors.

The alternatives, particularly manual review, often appear ‘free’ at first glance. After all, your developers are already on salary, right? Wrong. The true cost lies in the time spent context-switching, the delays in merging, the inevitable human errors, and the sheer mental fatigue that leads to burnout. Basic static analyzers might have free tiers or open-source versions, but they offer a fraction of CodeRabbit’s intelligence. So, while the immediate monetary outlay for alternatives might be zero, the long-term financial drain on productivity and quality can be astronomical – a classic case of ‘penny wise, pound foolish,’ but with more lines of code.
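For anyone who prefers their sarcasm with arithmetic, the ‘free’ versus ‘paid’ argument above can be sketched as a back-of-the-envelope calculation. Every number below (team size, review hours, hourly rate, tool cost, and the fraction of review time saved) is an invented assumption for illustration, not CodeRabbit’s actual pricing or a measured result, and the `ReviewCosts` class and its helper functions are hypothetical names made up for this sketch.

```python
from dataclasses import dataclass


@dataclass
class ReviewCosts:
    """Hypothetical inputs; none of these figures come from CodeRabbit."""
    developers: int                # people who review code
    review_hours_per_dev: float    # hours each spends reviewing per month
    hourly_rate: float             # fully loaded cost of one developer hour
    tool_cost_per_month: float     # assumed total monthly tool spend
    fraction_of_time_saved: float  # assumed share of review time saved (0..1)


def monthly_manual_cost(c: ReviewCosts) -> float:
    """Salary cost of the review time that looks 'free' on paper."""
    return c.developers * c.review_hours_per_dev * c.hourly_rate


def monthly_net_saving(c: ReviewCosts) -> float:
    """Value of the review time saved, minus what the tool costs."""
    return monthly_manual_cost(c) * c.fraction_of_time_saved - c.tool_cost_per_month


def break_even_fraction(c: ReviewCosts) -> float:
    """Share of review time the tool must save just to pay for itself."""
    return c.tool_cost_per_month / monthly_manual_cost(c)


if __name__ == "__main__":
    # Made-up example team; replace every value with your own.
    example = ReviewCosts(
        developers=10,
        review_hours_per_dev=20.0,
        hourly_rate=75.0,
        tool_cost_per_month=300.0,
        fraction_of_time_saved=0.25,
    )
    print(f"Manual review cost:  {monthly_manual_cost(example):.2f}")
    print(f"Net monthly saving:  {monthly_net_saving(example):.2f}")
    print(f"Break-even fraction: {break_even_fraction(example):.3f}")
```

With these invented inputs, manual review costs 15000.00 a month, the net saving is 3450.00, and the tool would pay for itself by saving just 2% of review time. The useful part is the break-even fraction: if you cannot believe the tool will save even that much, the ‘investment’ argument does not hold for your team.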

Ease of Use: Plug-and-Play vs. The Human Element

CodeRabbit v1.8 prides itself on its seamless integration and user-friendly interface. Hook it up to your Git repository, and it just… works. The AI-generated summaries and comments appear directly in your PR discussions, feeling almost native. There’s minimal setup, no complex configurations, just the sweet hum of automation. User feedback suggests that developers quickly adapt to its suggestions, often finding them intuitive and actionable.

Ease of use for alternatives is, shall we say, ‘variable.’ Manual review requires human beings to, well, *be human*. This involves reading, understanding, typing, and often, arguing. The ‘interface’ is your Git provider’s UI, and the ‘configuration’ is whatever unwritten social contract exists within your team about acceptable nitpicking. Static analysis tools often require configuration files, build step integration, and then the delightful task of sifting through thousands of warnings, many of which are false positives. It’s easy in the sense that you already know how to type, but difficult in the sense that you still have to *think*.
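The ‘sifting through thousands of warnings’ chore usually starts with finding out which rules make most of the noise. Here is a minimal sketch of that first triage step. It assumes a made-up `path:line: RULE message` output format, and the names `count_by_rule` and `noisiest_rules` are hypothetical; real analyzers each have their own formats, so treat the pattern as a placeholder to adapt.

```python
import re
from collections import Counter

# Assumed line shape: "path:line: RULE_ID message". Real tools differ.
WARNING_PATTERN = re.compile(
    r"^(?P<path>[^:]+):(?P<line>\d+): (?P<rule>[A-Z]+\d+) (?P<message>.+)$"
)


def count_by_rule(lines):
    """Count warnings per rule ID, skipping lines that do not match."""
    counts = Counter()
    for line in lines:
        match = WARNING_PATTERN.match(line.strip())
        if match:
            counts[match.group("rule")] += 1
    return counts


def noisiest_rules(lines, top=3):
    """Return the `top` most frequent rule IDs with their counts."""
    return count_by_rule(lines).most_common(top)


if __name__ == "__main__":
    # Invented sample output, including a summary line that is ignored.
    sample = [
        "app/orders.py:12: E501 line too long",
        "app/orders.py:40: E501 line too long",
        "app/cart.py:7: F401 unused import",
        "Summary: 3 warnings",
    ]
    print(noisiest_rules(sample, top=2))  # [('E501', 2), ('F401', 1)]
```

Once the loudest rules are known, a team can decide whether to fix them in bulk, tune them, or switch them off, which is exactly the kind of configuration work the manual route quietly demands.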

Performance: The Speed Demon vs. The Snail’s Pace

CodeRabbit v1.8 claims to significantly accelerate the code review process. By automating initial passes and providing succinct summaries, it allows human reviewers to focus on higher-level architectural concerns and critical logic, rather than chasing misplaced commas. This can drastically reduce review cycles, leading to faster merges and quicker deployments. The impact on quality is ostensibly positive, as more eyes (both human and AI) catch more issues.

The performance of traditional code review is notoriously sluggish. It’s dependent on reviewer availability, their current workload, their cognitive load, and whether they’ve had their morning coffee. A complex PR can sit for days, accumulating stale branches and blocking progress. While a thorough human review can catch nuanced issues, the sheer time investment means corners get cut, and the resulting trade-off between speed and quality tends to favor neither.

Best Use Cases for Each: When to Splurge, When to Suffer

CodeRabbit v1.8’s Sweet Spot

- High-volume repositories: If your team is churning out pull requests faster than a caffeinated squirrel stockpiling nuts, CodeRabbit can be a godsend, providing an initial sanity check and speeding up the overall review process.

- Distributed teams: When time zones and communication barriers make synchronous reviews a nightmare, CodeRabbit offers consistent, asynchronous feedback.

- Teams focused on consistency and quality: For environments where adherence to best practices and minimizing technical debt are paramount, CodeRabbit acts as an ever-vigilant guardian.

- Those who value developer time: If you believe your highly-paid engineers should be solving complex problems, not hunting for typos, CodeRabbit makes a strong case.

When to Embrace the ‘Alternatives’

- Tiny, hobby projects: If your codebase fits on a napkin and your team consists of you and your cat, CodeRabbit might be overkill.

- Teams with absolutely zero budget for tools: When every penny is allocated to instant ramen and server costs, manual review, propped up by whatever free static analyzer you can find, is your only (painful) option.

- Projects where ‘good enough’ is truly good enough: If the consequences of a bug are merely a shrug and a quick hotfix, perhaps the meticulousness of AI isn’t required.

- Teams who genuinely enjoy the intellectual sparring of manual review: Some people just like to argue about code. Who are we to judge?

Comparison Summary: The Verdict (Sort Of)

- Features: CodeRabbit v1.8 offers sophisticated, AI-driven contextual analysis and automation; alternatives provide raw human judgment and basic tooling.

- Pricing: CodeRabbit requires an investment for efficiency; alternatives are ‘free’ but incur significant hidden costs in time and quality.

- Ease of Use: CodeRabbit is integrated and largely hands-off; alternatives demand active human engagement and tool configuration.

- Performance: CodeRabbit accelerates review cycles and aims for consistent quality; alternatives are subject to human variability and often slower.

So, how do you choose? If your team is drowning in code, constantly battling review bottlenecks, and values its collective sanity (and budget for actual development, not just endless review cycles), then CodeRabbit v1.8 isn’t just an upgrade; it’s potentially a lifeline. It offers a tangible path to greater efficiency and a more consistent code quality baseline, freeing up your human reviewers for the truly complex, strategic discussions. However, if your projects are small, your budget is nonexistent, or you simply revel in the anachronistic charm of manually sifting through every single line of code, then by all means, stick to the tried-and-true (and painfully slow) methods. Just don’t come crying to us when your production environment spontaneously combusts because someone missed a null pointer exception.